Regression and Classification

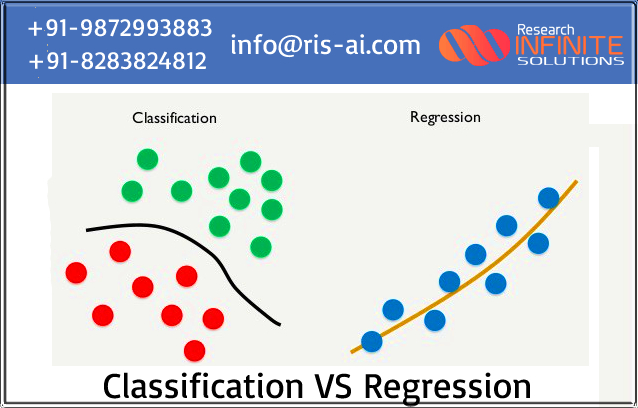

Difference between Regression and Classification

The main difference between Regression and Classification algorithms is that Regression algorithms are used to predict continuous values such as salary or age, while Classification algorithms are used to assign values to discrete classes such as Male or Female, True or False, Spam or Not Spam.

Regression:

Before starting with regression, I first go through an overview of it: the definition, the advantages, and the different regression techniques. So I start with the overview.

Definition: Regression analysis is a set of statistical processes for estimating the relationships between a dependent variable and one or more independent variables. We use regression analysis when we want to predict a continuous dependent variable from a number of independent variables.

Advantages:

- Predict sales in the near and long term.

- Understand inventory levels.

- Understand supply and demand.

- Review and understand how different variables impact all of these things.

The regression method of forecasting involves examining the relationship between two different variables, known as the dependent and independent variables.

Types of Regression:

- Linear regression

- Logistic regression

- Ridge regression

- Polynomial regression

After this I generally go through three types of regression: Linear, Logistic and Polynomial regression.

Linear Regression comprises a predictor variable and a dependent variable related to each other in a linear fashion. Logistic Regression uses a sigmoid curve to show the relationship between the target and independent variables. However, caution should be exercised: logistic regression works best with large data sets that have an almost equal occurrence of values in target variables.
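To make the sigmoid curve a little more concrete, here is a minimal sketch in plain Python. The `weight` and `bias` values are made up for illustration and are not fitted to any data; the helper names are my own.

```python
import math


def sigmoid(z: float) -> float:
    """Map a real number to the open interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def predict_proba(x: float, weight: float, bias: float) -> float:
    """Probability of the positive class for a single feature x."""
    return sigmoid(weight * x + bias)


# Hypothetical coefficients, chosen only for illustration.
weight, bias = 1.5, -3.0

for x in (0.0, 2.0, 4.0):
    p = predict_proba(x, weight, bias)
    label = 1 if p >= 0.5 else 0  # threshold the probability at 0.5
    print(f"x={x:.1f}  p={p:.3f}  predicted class={label}")
```

The linear part `weight * x + bias` is the same as in linear regression; the sigmoid only squashes it into a probability, which is then thresholded to get a class.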

Polynomial Regression models a non-linear dataset using a linear model. It is the equivalent of making a square peg fit into a round hole. It works in a similar way to multiple linear regression (which is just linear regression with multiple independent variables), but the extra variables are powers of the original predictor, so the fitted relationship is a non-linear curve.
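A small sketch of this idea, assuming NumPy is available: the predictor is expanded into the columns 1, x and x², and ordinary least squares is run on those columns. The data is synthetic and generated from a known quadratic, so the recovered coefficients can be checked by eye.

```python
import numpy as np

# Synthetic data from a known quadratic: y = 1 + 2x + 3x^2 (no noise).
x = np.linspace(-2.0, 2.0, 21)
y = 1.0 + 2.0 * x + 3.0 * x**2

# Expand x into the features [1, x, x^2]; the model stays linear in its coefficients.
features = np.column_stack([np.ones_like(x), x, x**2])

# Ordinary least squares on the expanded features.
coefficients, *_ = np.linalg.lstsq(features, y, rcond=None)
print(coefficients)  # close to [1, 2, 3]
```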

After that I performed Simple Linear Regression. Simple linear regression is a linear regression model with a single explanatory variable. That is, it concerns two-dimensional sample points with one independent variable and one dependent variable (conventionally, the x and y coordinates in a Cartesian coordinate system) and finds a linear function (a non-vertical straight line) that, as accurately as possible, predicts the dependent variable values as a function of the independent variable. The adjective simple refers to the fact that the outcome variable is related to a single predictor.
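Here is a minimal sketch of simple linear regression in plain Python, using the standard least-squares formulas (slope = covariance of x and y divided by variance of x). The function name and the tiny dataset are only for illustration.

```python
def fit_simple_linear_regression(xs, ys):
    """Return (slope, intercept) of the least-squares line y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance_x = sum((x - mean_x) ** 2 for x in xs)
    if variance_x == 0:
        raise ValueError("all x values are identical; the line would be vertical")
    slope = covariance / variance_x
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Illustrative data that lies exactly on y = 2x + 1.
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [3.0, 5.0, 7.0, 9.0, 11.0]

slope, intercept = fit_simple_linear_regression(xs, ys)
print(f"slope={slope}, intercept={intercept}")
```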

I performed Linear Regression on a simple dataset of random numbers and also calculated the constraint value $|y_i - w_i x_i|$, where $y_i$ is the target value, $w_i$ is the coefficient and $x_i$ is the predictor. I also calculated the max and min illustrative values, that is $w_i x_i + \epsilon$ (max illustrative) and $w_i x_i - \epsilon$ (min illustrative).
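These quantities can be sketched in a few lines of plain Python. The coefficient `w`, the margin `eps` and the sample points are hypothetical values picked for the example, and the function names are my own.

```python
def constraint_value(y: float, w: float, x: float) -> float:
    """Absolute residual |y - w*x| between the target and the prediction."""
    return abs(y - w * x)


def illustrative_bounds(w: float, x: float, eps: float):
    """Return (min, max) = (w*x - eps, w*x + eps) around the prediction."""
    prediction = w * x
    return prediction - eps, prediction + eps


# Hypothetical values, for illustration only.
w, eps = 2.0, 0.5
samples = [(1.0, 2.2), (2.0, 3.0), (3.0, 6.4)]  # (x, y) pairs

for x, y in samples:
    residual = constraint_value(y, w, x)
    low, high = illustrative_bounds(w, x, eps)
    inside = low <= y <= high  # is the target within the band around the prediction?
    print(f"x={x}  |y - wx|={residual:.2f}  band=[{low}, {high}]  inside={inside}")
```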

After that I performed Vector Regression on the Life Expectancy dataset to predict life expectancy. Support vector machines are supervised learning models with associated learning algorithms that analyze data for classification and regression analysis. I used Sklearn to import SVM, then analysed the dataset to get information about it, applied vector regression by fitting the dataset values, and plotted different graphs to analyse the predicted values.
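A minimal sketch of fitting a support vector regressor with scikit-learn follows. It uses synthetic stand-in data rather than the Life Expectancy dataset, and the kernel, `C` and `epsilon` values are assumptions chosen for the example, not the settings used in the original work.

```python
import numpy as np
from sklearn.svm import SVR

# Synthetic stand-in data (not the Life Expectancy dataset): y = 2x + 1.
X = np.linspace(0.0, 10.0, 50).reshape(-1, 1)
y = 2.0 * X.ravel() + 1.0

# epsilon sets the width of the tube inside which errors are not penalised.
model = SVR(kernel="linear", C=100.0, epsilon=0.1)
model.fit(X, y)

predictions = model.predict(X)
max_error = float(np.max(np.abs(predictions - y)))
print(f"largest absolute error on the training data: {max_error:.3f}")
```

On a real dataset the features would normally be scaled first and the model evaluated on held-out data rather than on the training points.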

After that I performed various types of regression on the same dataset, as follows:

- Random forest regression

- Catboost regression

- XgBoost regression

- LightGBM regression

- Decision Tree

So before performing these types of regression, I go through their definitions:

1. Random Forest Regression is a supervised learning algorithm that uses an ensemble learning method for regression. Ensemble learning is a technique that combines predictions from multiple machine learning models to make a more accurate prediction than a single model. A Random Forest operates by constructing several decision trees during training time and outputting the mean of the predictions of all the trees.

2. Decision tree builds regression or classification models in the form of a tree structure. It breaks down a dataset into smaller and smaller subsets while at the same time an associated decision tree is incrementally developed. The final result is a tree with decision nodes and leaf nodes.

3. XGBoost is an efficient implementation of gradient boosting that can be used for regression predictive modeling. XGBoost is a powerful approach for building supervised regression models; the reason becomes clear from looking at its objective function and base learners.

4. CatBoost is an open-source machine learning algorithm from Yandex. It can easily integrate with deep learning frameworks like Google’s TensorFlow and Apple’s Core ML. It can work with diverse data types to help solve a wide range of problems that businesses face today. To top it off, it is promoted as providing best-in-class accuracy.

5. LightGBM extends the gradient boosting algorithm by adding a type of automatic feature selection as well as focusing on boosting examples with larger gradients. This can result in a dramatic speedup of training and improved predictive performance.
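To illustrate the ensemble idea from the Random Forest definition, here is a small sketch with scikit-learn on synthetic data (a noisy sine curve, not the Life Expectancy dataset). It checks that the forest's prediction is the mean of the predictions of its individual trees. The number of trees and the random seeds are arbitrary choices.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor

# Synthetic data for illustration: a noisy sine curve.
rng = np.random.default_rng(0)
X = rng.uniform(0.0, 6.0, size=(200, 1))
y = np.sin(X.ravel()) + rng.normal(0.0, 0.1, size=200)

forest = RandomForestRegressor(n_estimators=25, random_state=0)
forest.fit(X, y)

X_new = np.array([[1.0], [3.0], [5.0]])

# Each fitted decision tree makes its own prediction ...
per_tree = np.array([tree.predict(X_new) for tree in forest.estimators_])

# ... and the forest's prediction is the mean over the trees.
print(per_tree.mean(axis=0))
print(forest.predict(X_new))
```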

After that I performed various operations on the Life Expectancy dataset to predict life expectancy in the coming years, applied the regression algorithms to predict the data, and calculated the Mean Absolute Error and Mean Squared Error to check how consistent the predictions were.
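Both error measures are simple to compute by hand. The sketch below uses plain Python and a handful of made-up life-expectancy values (in years), not figures from the actual dataset.

```python
def mean_absolute_error(y_true, y_pred):
    """Average of the absolute differences between targets and predictions."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def mean_squared_error(y_true, y_pred):
    """Average of the squared differences; penalises large errors more heavily."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


# Hypothetical values in years, for illustration only.
actual = [70.0, 65.0, 80.0, 75.0]
predicted = [72.0, 64.0, 77.0, 75.0]

mae = mean_absolute_error(actual, predicted)
mse = mean_squared_error(actual, predicted)
print(f"MAE={mae}  MSE={mse}")  # MAE=1.5  MSE=3.5
```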

Types of classification:

After that I performed various classification methods on the dataset to classify it.

Classification refers to a predictive modeling problem where a class label is predicted for a given example of input data. Examples of classification problems include: Given an example, classify if it is spam or not. Given a handwritten character, classify it as one of the known characters.

Decision Tree Classification: A decision tree builds classification or regression models in the form of a tree structure. It breaks down a dataset into smaller and smaller subsets while at the same time an associated decision tree is incrementally developed.

- Perform the Decision Tree Classifier algorithm, which creates a training model that can be used to predict the class or value of the target variable by learning simple decision rules inferred from prior data or training data.

- Perform XGBoost regression, which is a scalable and accurate implementation of gradient boosting machines that has proven to push the limits of computing power for boosted tree algorithms.

- Perform the GBM algorithm (Gradient Boosting Machine), which is used to predict accurate values; it can be used for predicting not only a continuous target variable but also a categorical target variable (classifier values).

- Perform the Light GBM algorithm, which is a fast, distributed, high-performance gradient boosting framework based on the decision tree algorithm, used for ranking, classification and many other machine learning tasks.
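As a rough sketch of the point that gradient boosting handles both continuous and categorical targets, the example below uses scikit-learn's own gradient boosting estimators on synthetic data. This is a stand-in chosen for the illustration; it is not XGBoost or LightGBM, and the targets are invented.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingClassifier, GradientBoostingRegressor

rng = np.random.default_rng(0)
X = rng.uniform(-3.0, 3.0, size=(300, 2))

# Continuous target: a smooth function of the two features.
y_continuous = X[:, 0] ** 2 + X[:, 1]
regressor = GradientBoostingRegressor(random_state=0).fit(X, y_continuous)

# Categorical target: 1 when the point lies inside a circle of radius 2, else 0.
y_class = (X[:, 0] ** 2 + X[:, 1] ** 2 < 4.0).astype(int)
classifier = GradientBoostingClassifier(random_state=0).fit(X, y_class)

# Scores on the training data itself, shown only to confirm that both models fit.
r2 = regressor.score(X, y_continuous)
accuracy = classifier.score(X, y_class)
print(f"regression R^2={r2:.3f}  classification accuracy={accuracy:.3f}")
```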

After that I performed the classifier and regressor decision tree techniques and their operations, and learned how a decision tree classifies the data. After that I analysed the Breast Cancer dataset and split it into training and test sets.

After that I performed classification on the Breast Cancer dataset (Decision Tree classification, Random Forest classification, CatBoost classification) and their operations. After that I performed OpenCV operations on an image dataset: colour conversion (RGB to Gray), warping of an image, and face detection.
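The Breast Cancer step can be sketched with scikit-learn, which ships a copy of the dataset as `load_breast_cancer`. This is a minimal example under my own assumptions (a 75/25 stratified split, default hyperparameters, fixed seeds) and it leaves out CatBoost, which needs a separate package.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

X, y = load_breast_cancer(return_X_y=True)

# Hold out a quarter of the samples for testing; stratify keeps the class balance.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0, stratify=y
)

models = {
    "decision tree": DecisionTreeClassifier(random_state=0),
    "random forest": RandomForestClassifier(n_estimators=100, random_state=0),
}

scores = {}
for name, model in models.items():
    model.fit(X_train, y_train)
    scores[name] = accuracy_score(y_test, model.predict(X_test))
    print(f"{name}: test accuracy = {scores[name]:.3f}")
```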

After that I performed classification methods on the image dataset to find the best accuracy score, and learned what Image Classification is and what its use cases are. Image Classification means assigning to an input image one label from a fixed set of categories. It is used in areas such as medical image analysis, identifying objects in autonomous cars, and face detection for security purposes. After applying different classification methods, I found that the SVM classifier had the best accuracy score and confusion matrix.
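For reference, the accuracy score and confusion matrix used to compare the classifiers can be computed as in this plain-Python sketch. The labels and predictions are invented for the example and do not come from the image dataset.

```python
def confusion_matrix(y_true, y_pred, labels):
    """Rows are true labels, columns are predicted labels, in the order of `labels`."""
    index = {label: i for i, label in enumerate(labels)}
    matrix = [[0] * len(labels) for _ in labels]
    for t, p in zip(y_true, y_pred):
        matrix[index[t]][index[p]] += 1
    return matrix


def accuracy_score(y_true, y_pred):
    """Fraction of predictions that match the true label."""
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)


# Hypothetical labels, for illustration only.
labels = ["cat", "dog"]
y_true = ["cat", "cat", "dog", "dog", "dog", "cat"]
y_pred = ["cat", "dog", "dog", "dog", "cat", "cat"]

matrix = confusion_matrix(y_true, y_pred, labels)
accuracy = accuracy_score(y_true, y_pred)
print(matrix)    # [[2, 1], [1, 2]]
print(accuracy)  # 4 of 6 correct
```

The diagonal of the matrix counts correct predictions, so accuracy is the diagonal sum divided by the total number of samples.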
